news from the future

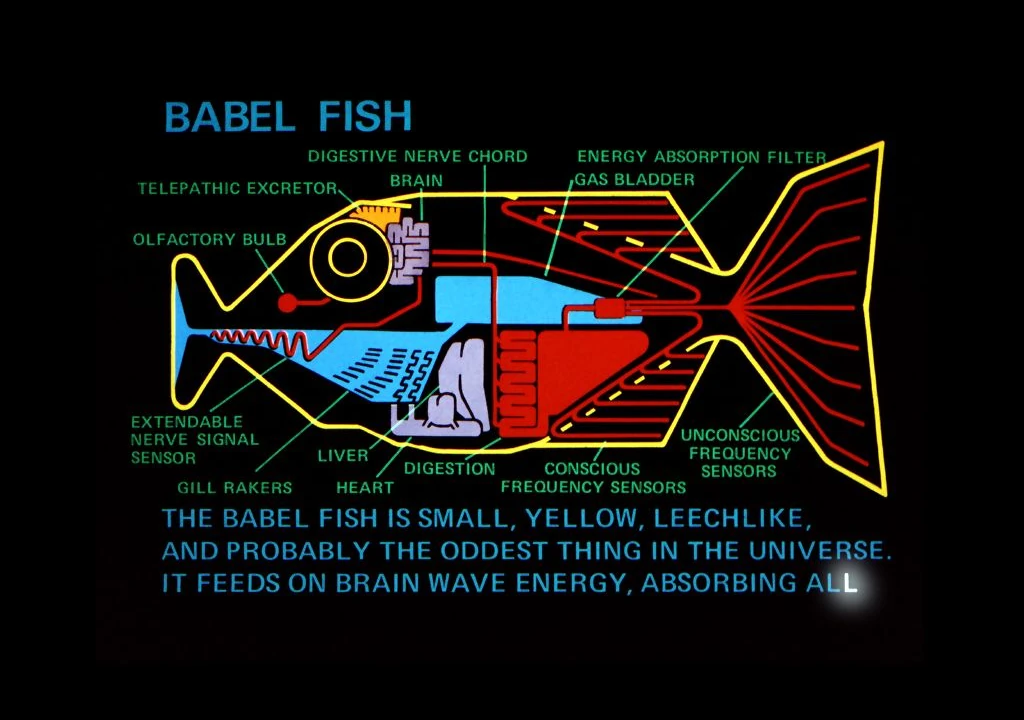

Everything Talks to Everything

AI opens up a world where technological mismatches and incompatibility are a thing of the past.

Don’t dismiss AI as a creative tool

I see more negative stories about AI than I do positive ones right now. That’s understandable. Concerns about copyright, jobs, environmental impact, weaponisation, and security are all sensible. But we shouldn’t ignore the fact that this iteration of AI is also an incredibly powerful creative tool.

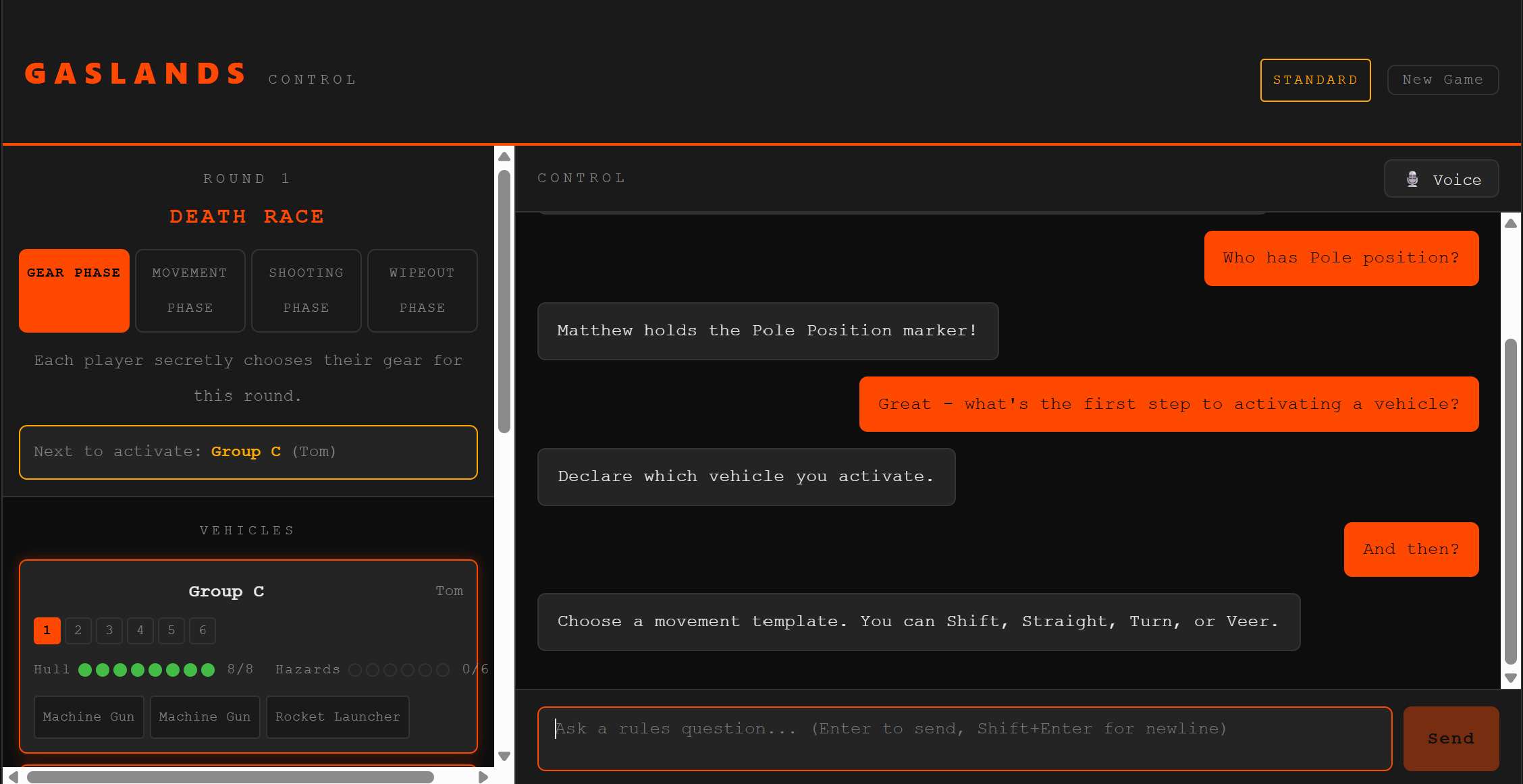

Tom OS: How AI transformed my workflow in a weekend

I’ve built a new workflow system to help me handle my busiest year yet. And thanks to AI, it only took me a weekend.

The Age of Quality

Future technology will not be obvious or flashy. It will be invisible, efficient, and ubiquitous. It will be enduring. Tomorrow’s technology might be one pillar of an age of quality.

The AI Opportunities Action Plan

What does the UK government’s new AI Opportunities Action Plan actually mean?

Not unusually, I find myself having to answer this question in a bit more depth than I might otherwise have done, and at short notice.

My 2025 Predictions

Today I’m recording the first of my annual radio chats making predictions for 2025. So it’s time that I review last year’s predictions as well.

- Future Car 4

- Future Communication 17

- Future Health 10

- Future Media 10

- Future Technology 19

- Future of Business 10

- Future of Cities 9

- Future of Education 7

- Future of Energy 8

- Future of Finance 19

- Future of Food 6

- Future of Housing 3

- Future of Humanity 22

- Future of Retail 9

- Future of Sport 1

- Future of Transport 9

- Future of Work 13

- Future society 12

- Futurism 20

- clippings 1

Archive Note

The archive of posts on this site has been somewhat condensed and edited, not always deliberately. This blog started all the way back in 2006, when working full time as a futurist was still a distant dream, and at one point it numbered nearly 700 posts. There have been attempts to reduce replication, trim out some weaker posts, and tell more complete stories, but there have also been some losses through multiple site moves: it has been hosted on Blogger, WordPress, Medium, and now Squarespace. The result is that dates and metadata on the posts may not be accurate, and many posts may be missing their original images.

You can search all of my posts through the search box, or click through some of the relevant categories. Purists can search my more complete archive here.